Sam Altman's Endgame

On OpenAI, legal cartels, and the end of AI safety

A few different notes and ideas this week about the interface between AI, power, and the law. And I’m big mad about a stupid law being floated in NY.

OpenAI’s obvious endgame: becoming a GSE

OpenAI raised $110B in the largest private fundraise in history. The same day, Trump threatened to kill Anthropic, and OpenAI stepped over the body to take the Pentagon contract (with some equivocating).

This is one event.

Foundation models are obviously incredible products but might be terrible businesses.

Training is a cost of revenue, not an R&D cost. Stop training, and everyone churns and moves on to your competitor or a distilled Chinese version. The labs are perpetually investing unbelievable sums in building these fast-depreciating assets (capex and training). And each new SOTA model costs more than the last; we’re rapidly approaching $1B+ training runs.

There are only so many places to get $100B. The largest IPO in history, Saudi Aramco, was “just” $30B. The capital markets are running out of road. Retail and public markets can’t easily absorb or finance the build-out.

The natural next dollars are from the U.S. government. The only parts of the economy that are growing are the AI capex trade and the great national nursing home. Without AI capex we would be in a recession, and the Trump admin can’t take that risk. The DoD contract fight was a preview of the government truly picking winners and engaging in Chinese-style market intervention.

The endgame: OpenAI will become a GSE/SOE, at least in fact if not in law. The mechanism – equity, debt, guarantees, some weird structure – is unclear and unimportant.

It’s very likely the taxpayer ends up as the bagholder.

Legal AI Is Pro-Consumer

New York is considering a law banning chatbots from doing anything that might be construed as legal work (SB S7263).

Banning AI legal advice is stupid and anti-consumer.

Obviously, there is the potential for consumer harm in chatbots providing legal advice, but the benefits far outweigh the risk. The biggest benefit, very clearly, is leveling the playing field.

All the lawyers are obviously gonna have AI, and the potential for lawfare and flooding the zone with abusive lawsuits is extraordinarily high. Contracts are gonna get longer. Legal fights will get more complicated. We’re all gonna be drowning in paper because of AI.

If lawyers are the only people who can use legal AI, then only people with lawyers on standby and large legal budgets will have access to those tools, by proxy.

Without legal AI for consumers, we’ll see the most massive re-weighting of the American legal system towards landlords, corporations, and employers in history. Consumers and small businesses will suffer massively when they go up against a constant barrage of frivolous lawsuits, long contracts, and punitive agreements.

We are moving to an increasingly hostile legal environment, and consumers need access to good tools to have a chance.

This law being proposed in New York is reflective of the legal cartels’ scarcity mindset and anti-competitive position.

Clawd - Dean W. Ball

Dean Ball was an AI advisor to Trump, and he writes an extremely convincing, and terribly bleak, essay about how the fight between Trump and Anthropic represents an acceleration in the death of the American project/republic.

The gist of it is that Congress has become so calcified and incapable that lawmaking now happens on an almost entirely arbitrary executive basis, with no recourse. That power is awesome and terrifying, and it is now wielded by people too stupid and reckless to take it seriously, let alone operate within constraints:

Even if Secretary Hegseth backs down and narrows his extremely broad threat against Anthropic, great damage has been done. Even in the narrowest supply-chain risk designation, the government has still said that they will treat you like a foreign adversary—indeed, they will treat you in some ways worse than a foreign adversary—simply for refusing to capitulate to their terms of business. Simply for having different ideas, expressing those ideas in speech, and actualizing that speech in decisions about how to deploy and not deploy one’s property. Each of these things is fundamental to our republic, and each was assaulted—not anything like for the first time but nonetheless in novel ways—by the Department of War last week. Most corporations, political actors, and others will have to operate under the assumption that the logic of the tribe will now reign.

There is something deeper about the damage done by the government, too. The Anthropic-DoW skirmish is the first major public debate that is truly about where the proper locus of control over frontier AI should be. Our public institutions behaved erratically, maliciously, and without strategic clarity. Our political leaders conveyed little understanding of their own actions, to say nothing of the technology and its stakes. They got off on an extraordinarily bad footing, and it is hard to imagine them ever recovering, because they do not seem to care about improvement. They are a cartoonish depiction of the American political elite, but sadly their failings have been the prototype of American political elites from both parties for much of my life now. “The same as before, but now noticeably worse” has been the theme of American politics for 20 years.

As I have said many times before, neither liberal democracy nor capitalism works without strong property rights. Trump doesn’t respect either.

AI Safety Has 12 Months Left - Michael Dempsey

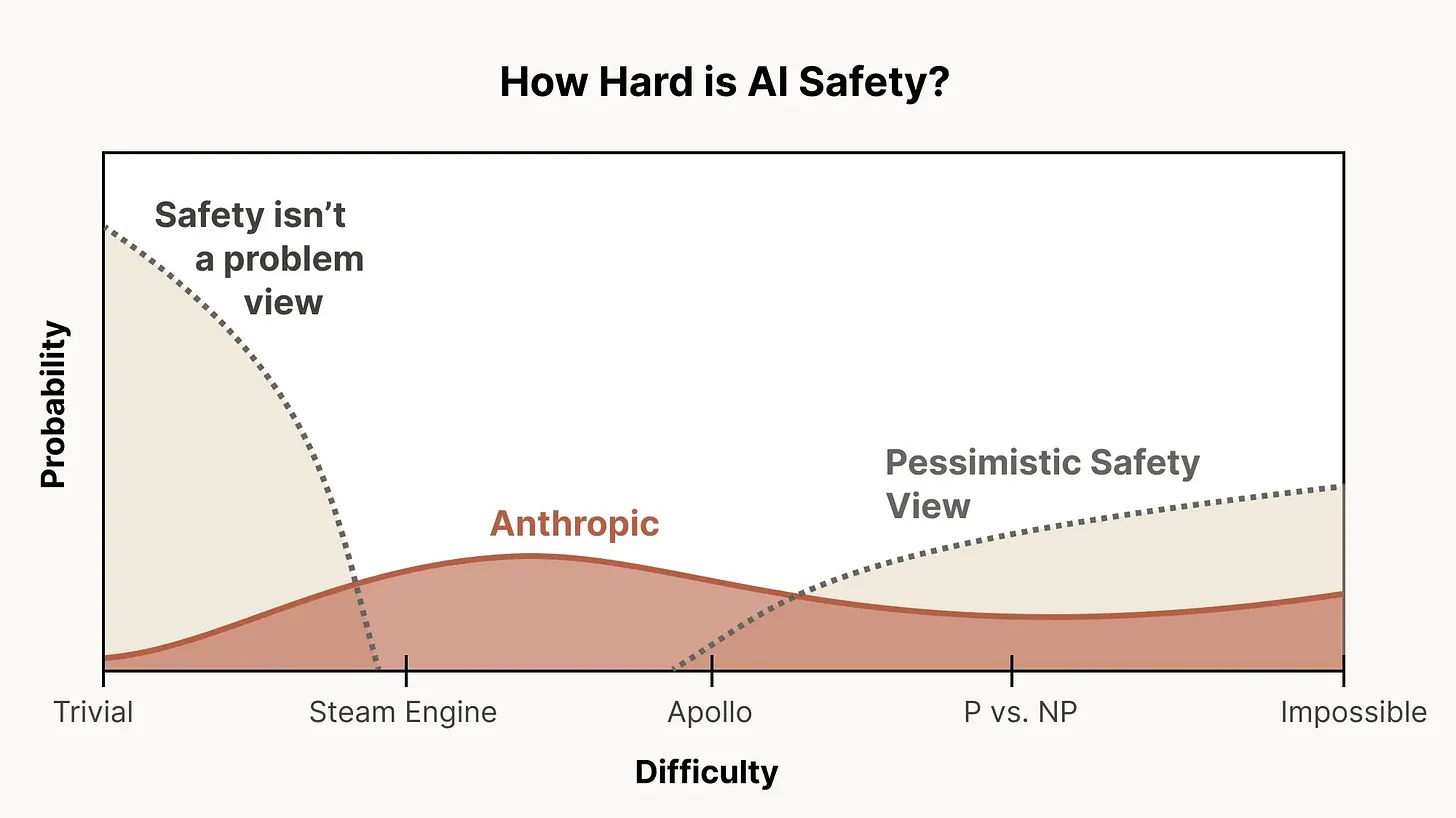

Dempsey offers a good summary and POV on how dire things are for any prospect of safety being a serious thing that matters to anyone beyond the EA cult.

There is roughly a twelve-month window to embed [safety] into technical and social infrastructure before IPOs and competitive dynamics make it permanently impossible. Every mechanism that currently constrains how labs behave besides compute is either already dead or expiring, and what gets built in that window has to survive years of market pressure on the other side. The people who care about this have a rapidly shrinking amount of leverage to work with but perhaps in the past ~week have been handed a specific opportunity to reclaim some level of importance or opportunity.

I’ve long wavered on the idea of AI safety because I’m not a believer in fast-takeoff superintelligent AGI; Skynet is not on my radar of threats. But it seems clear that there is increasingly nothing governing the use of AI but technical limitations and Dario’s benevolence.

Hiring

I’ve been helping a number of portfolio companies with hiring, which has been really fun. In talking to founders about their hiring plans and to candidates about what they want, it’s become very clear that the paradigm of the good early-stage startup employee has shifted significantly and rapidly over the past few years.

The best early-stage hires are radically different from a few years ago: multi-hyphenate, commercial generalists.

The engineers want to talk to customers, and the business people write code.

High-agency and AI-native instead of heads-down 10x performers.

Some exciting roles/companies to join:

That's depressing, especially when you consider all the snippets together as a whole. Dean Ball worked in the Admin and knows what he's talking about. Can we get back to ways I can build defensibility into my commoditizing AI business? That's a much better way to end the week.